Step-by-Step Guide to Using Together AI with LLaMA 3 and CodeLlama for Python Code Generation

Large language models (LLMs) have changed how we interact with technology, and Together AI puts open models like LLaMA 3 and CodeLlama 34B Instruct at your fingertips. In this article, we’ll look at what makes these models special, how Together AI provides value, and how to build a practical use case: generating a Python application from a natural language description.

Understanding the Building Blocks

What is LLaMA 3?

LLaMA 3 (Large Language Model Meta AI) is an advanced open-source language model developed by Meta. Its strengths include:

- Contextual Understanding: Processes complex natural language prompts with high accuracy.

- Architecture Design: Ideal for generating application plans, architectures, and designs.

- Openly Available: Model weights can be downloaded under Meta’s community license, giving developers flexibility and reducing vendor lock-in.

What is CodeLlama 34B Instruct?

CodeLlama is a family of models from Meta, built on Llama 2 and fine-tuned for programming tasks. The “34B” refers to its roughly 34 billion parameters, and the Instruct variant is further tuned to follow natural language instructions. It is well suited to:

- Code Generation: Generates code in Python, JavaScript, and other programming languages.

- Problem Solving: Handles complex programming prompts and adapts to user needs.

- Clear Documentation: Outputs code with detailed comments for easy understanding.

What is Together AI?

Together AI is a platform that simplifies access to leading open-source models. With Together AI, you can:

- Use powerful LLMs like LLaMA 3 and CodeLlama via a single API.

- Avoid proprietary restrictions while leveraging state-of-the-art AI.

- Build applications at a fraction of the cost compared to commercial solutions.
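To make the "single API" point concrete, here is a minimal sketch showing that the two models used later in this article differ only in the `model` string passed to the same chat-completions call. The helper name `build_chat_request` and the `MODELS` mapping are illustrative assumptions, not part of the Together SDK; the model IDs are the ones used in the walkthrough.

```python
# Illustrative sketch: the same request shape works for both models.
# MODELS and build_chat_request are hypothetical names, not Together SDK APIs.
MODELS = {
    "architecture": "meta-llama/Meta-Llama-3-70B-Instruct-Turbo",
    "code": "codellama/CodeLlama-34b-Instruct-hf",
}


def build_chat_request(task: str, prompt: str) -> dict:
    """Return keyword arguments for client.chat.completions.create()."""
    return {
        "model": MODELS[task],
        "messages": [{"role": "user", "content": prompt}],
    }


# With a configured client, the call would look like:
#   response = client.chat.completions.create(**build_chat_request("code", "..."))
request = build_chat_request("code", "Write a function that adds two numbers.")
print(request["model"])
```

Keeping the model IDs in one place like this makes it easy to swap in a different model without touching the rest of the code.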

The Use Case: Natural Language to Python Application

Imagine you’re a data analyst or developer who needs to process CSV files. Instead of manually writing the code, what if you could describe the application in plain English and get:

- A detailed architecture from LLaMA 3.

- Fully functional Python code generated by CodeLlama.

This pipeline transforms natural language into working software, saving time and effort while promoting collaboration between technical and non-technical users.

Step-by-Step Walkthrough of the Code

Here’s how you can build the natural language-to-code pipeline in Google Colab using Together AI.

1. Install and Set Up Together AI

First, ensure you have Together AI’s library installed and set up your API key.

# Install necessary libraries (uncomment if running for the first time)
# !pip install together

# Import necessary libraries
import os
from together import Together

# Set your Together AI API key
os.environ['TOGETHER_API_KEY'] = 'YOUR_API_KEY_HERE' # Replace 'YOUR_API_KEY_HERE' with your actual API key

# Initialize Together client
client = Together()
print("Together AI setup complete!")

2. Define the LLaMA 3 Function

LLaMA 3 will take your natural language description and generate a detailed architecture and design for the application.

def get_architecture_with_llama3(description):
    """
    Generate the architecture and design for a Python application based on a natural language description.

    Parameters:
        description (str): A plain-text description of the desired application.

    Returns:
        str: Architecture and design or an error message.
    """
    try:
        prompt = (
            f"You are a software architect. Based on the following natural language description, "
            f"design the architecture and provide a detailed plan for implementing the Python application. "
            f"Focus on the structure, modules, and how the application should work.\n\n"
            f"Description: {description}\n\n"
            f"Architecture and Design:"
        )
        print(f"Generated Prompt for LLaMA 3:\n{prompt}\n")

        # Send request to Together AI
        response = client.chat.completions.create(
            model="meta-llama/Meta-Llama-3-70B-Instruct-Turbo", # LLaMA 3 model
            messages=[{"role": "user", "content": prompt}]
        )

        # Extract the generated architecture
        architecture = response.choices[0].message.content.strip()
        return architecture
    except Exception as e:
        return f"Error with LLaMA 3: {e}"

3. Define the CodeLlama Function

Once you have the architecture, pass it to CodeLlama to generate Python code.

def generate_code_with_codellama(architecture):
    """
    Generate Python code based on the provided architecture and design using the Meta CodeLlama model.

    Parameters:
        architecture (str): The architecture and design of the application.

    Returns:
        str: Generated Python code or an error message.
    """
    try:
        prompt = (
            f"You are a Python programming assistant. Based on the following architecture and design, "
            f"generate the Python code to implement the application. Ensure the code is clear, well-commented, "
            f"and includes necessary imports.\n\n"
            f"Architecture and Design: {architecture}\n\n"
            f"Generated Python Code:"
        )
        print(f"Generated Prompt for CodeLlama:\n{prompt}\n")

        # Send request to Together AI
        response = client.chat.completions.create(
            model="codellama/CodeLlama-34b-Instruct-hf", # Meta CodeLlama model
            messages=[{"role": "user", "content": prompt}]
        )

        # Extract the generated code
        generated_code = response.choices[0].message.content.strip()
        return generated_code
    except Exception as e:
        return f"Error with CodeLlama: {e}"

4. Run the Pipeline

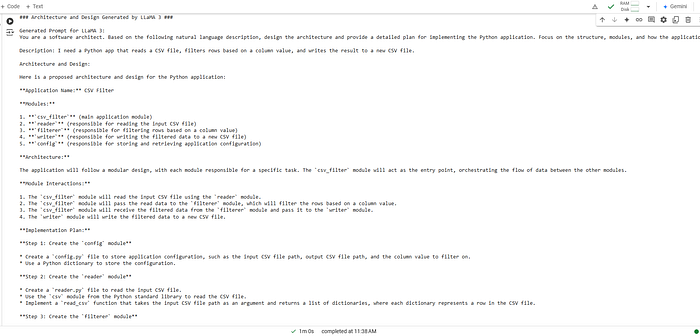

Provide a description of your desired application, and the pipeline will generate both the architecture and the code.

# Example usage

# Step 1: User submits a natural language description
description = "I need a Python app that reads a CSV file, filters rows based on a column value, and writes the result to a new CSV file."

# Step 2: Get the architecture and design from LLaMA 3
print("### Architecture and Design Generated by LLaMA 3 ###\n")
architecture = get_architecture_with_llama3(description)
print(architecture)

# Step 3: Use the architecture to generate Python code with CodeLlama
print("\n### Code Generated by Meta CodeLlama 34B Instruct ###\n")
generated_code = generate_code_with_codellama(architecture)
print(generated_code)
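One thing to watch for: both helper functions return failures as ordinary strings, so the example above would pass a failed LLaMA 3 result to CodeLlama as if it were an architecture. Here is a minimal guard, assuming the "Error with" prefix used in the functions above. The names `is_error` and `run_checked` are hypothetical helpers, and the stages are replaced by stand-in lambdas so the sketch runs without an API key.

```python
ERROR_PREFIX = "Error with"  # prefix used by the helper functions' except branches


def is_error(result: str) -> bool:
    """True if a helper returned its error message instead of model output."""
    return result.startswith(ERROR_PREFIX)


def run_checked(description, plan_fn, code_fn):
    """Run both stages, stopping early if the first stage failed."""
    architecture = plan_fn(description)
    if is_error(architecture):
        return architecture, None  # skip the second API call
    return architecture, code_fn(architecture)


# Stand-in for a failed first stage; no API call is made here.
arch, code = run_checked(
    "any description",
    lambda d: "Error with LLaMA 3: simulated failure",
    lambda a: "print('never reached')",
)
print(arch)
print(code)
```

In the notebook, this could be called as `run_checked(description, get_architecture_with_llama3, generate_code_with_codellama)`.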

Why Together AI is a Game Changer

- Open-Source Models: Access powerful models without proprietary restrictions.

- Seamless Integration: Combine models like LLaMA 3 and CodeLlama for innovative applications.

- Cost-Effective: Build applications without expensive licensing fees.

Conclusion

You’ve just built a powerful pipeline that transforms natural language descriptions into Python code using Together AI. This approach saves time, bridges the gap between technical and non-technical users, and opens up endless possibilities for automation and innovation.

In a future article, we’ll explore how to integrate this pipeline into a user-friendly interface using Gradio, Streamlit, or Dash. Stay tuned! 🚀

Resources

- Together AI Demos

- Together AI Documentation

Let me know if you try this out or have any feedback!

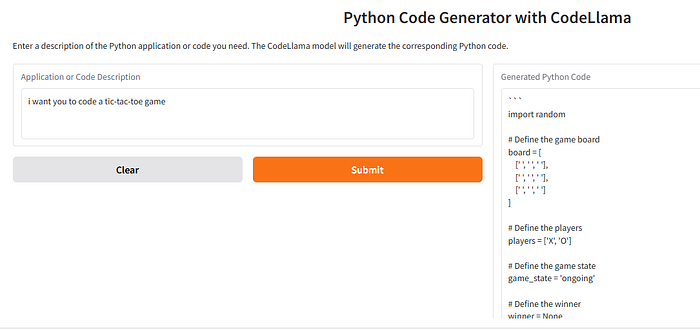

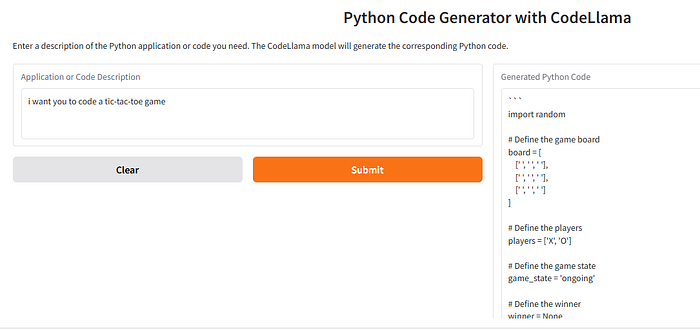

Addendum: Code User Interface with Gradio and CodeLlama for Python Code Generation

# Install necessary libraries (only needed the first time you run the notebook)
!pip install together gradio

# Import necessary libraries
import os
import gradio as gr
from together import Together

# Set your Together AI API key
os.environ['TOGETHER_API_KEY'] = 'YOUR_API_KEY_HERE' # Replace with your actual API key

# Initialize Together client
client = Together()

# Function to generate Python code using CodeLlama
def generate_code_with_codellama(description):
    """
    Generate Python code based on a natural language description using CodeLlama.

    Parameters:
        description (str): A plain-text description of the desired Python code.

    Returns:
        str: Generated Python code or an error message.
    """
    try:
        prompt = (
            f"You are a Python programming assistant. Based on the following description, "
            f"generate the Python code. Ensure the code is clear, well-commented, and includes necessary imports.\n\n"
            f"Description: {description}\n\n"
            f"Generated Python Code:"
        )

        # Call Together AI
        response = client.chat.completions.create(
            model="codellama/CodeLlama-34b-Instruct-hf", # CodeLlama model
            messages=[{"role": "user", "content": prompt}]
        )

        # Extract the generated code
        generated_code = response.choices[0].message.content.strip()
        return generated_code
    except Exception as e:
        return f"Error with CodeLlama: {e}"

# Define Gradio interface function
def gradio_generate_code(description):
    """
    Gradio function to take user input and generate Python code.

    Parameters:
        description (str): User-provided description of the desired Python application or snippet.

    Returns:
        str: Generated Python code.
    """
    if not description.strip():
        return "Please provide a valid description."

    # Generate code using CodeLlama
    code = generate_code_with_codellama(description)
    return code

# Create the Gradio interface
interface = gr.Interface(
    fn=gradio_generate_code,
    inputs=gr.Textbox(lines=3, placeholder="Describe the application or code you want", label="Application or Code Description"),
    outputs=gr.Textbox(label="Generated Python Code"),
    title="Python Code Generator with CodeLlama",
    description="Enter a description of the Python application or code you need. The CodeLlama model will generate the corresponding Python code."
)

# Launch the Gradio app
interface.launch(share=True)

How It Works

Input:

- Users enter a description, such as:

  - “Write a Python function to calculate the sum of numbers in a list.”

  - “Create a script to scrape headlines from a news website using BeautifulSoup.”

Processing:

- The description is passed to CodeLlama via the Together AI API.

- CodeLlama generates Python code based on the description.

Output:

- The generated Python code is displayed in a textbox.

Gradio Interface:

- Runs inside the Colab runtime; with share=True, Gradio also provides a temporary public link.

- Allows users to interact with the app directly in the notebook.
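A practical detail for the Output step: instruction-tuned models often wrap their answer in Markdown code fences with explanatory text around it, and that would show up verbatim in the textbox. Here is a small post-processing sketch, assuming triple-backtick fences. The helper name `extract_code` is hypothetical and not part of Gradio or the Together SDK.

```python
import re

FENCE = "`" * 3  # built this way to avoid a literal fence inside this example

# Matches a fenced block, with an optional language tag after the opening fence.
_FENCED = re.compile(FENCE + r"[\w+-]*[ \t]*\n(.*?)" + FENCE, re.DOTALL)


def extract_code(response_text: str) -> str:
    """Return the fenced code blocks joined together, or the stripped text if none."""
    blocks = _FENCED.findall(response_text)
    if not blocks:
        return response_text.strip()
    return "\n\n".join(block.strip() for block in blocks)


sample = f"Here is the script:\n{FENCE}python\nprint('hi')\n{FENCE}\nHope this helps!"
print(extract_code(sample))
```

In the app above, `gradio_generate_code` could return `extract_code(code)` instead of `code` if only the code itself should be displayed.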

How to Use This Code

Replace API Key:

- Replace 'YOUR_API_KEY_HERE' with your actual Together AI API key.

Run the Notebook:

- Copy the code block into a Colab notebook and run it.

Access the Interface:

- Once the Gradio app launches, you’ll get a shareable link. Use it to interact with the app or share it with others.

Test Use Cases:

- Input natural language descriptions to test the Python code generation.
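When testing generated code, a cheap first check is whether it parses as Python at all. The sketch below uses the standard library's `ast.parse`, which only parses the source and does not execute it. The helper name `check_syntax` is an assumption for illustration, and passing this check says nothing about whether the code does what was asked.

```python
import ast


def check_syntax(source: str):
    """Return (True, "") if source parses as Python, else (False, message)."""
    try:
        ast.parse(source)
        return True, ""
    except SyntaxError as exc:
        return False, f"line {exc.lineno}: {exc.msg}"


# A valid snippet and a deliberately broken one.
print(check_syntax("def add(a, b):\n    return a + b\n"))
print(check_syntax("def broken(:\n    pass\n"))
```

A check like this could run on the model output before it is shown to the user, with the error message displayed alongside code that fails to parse.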

Example Interaction

Input:

“Write a Python script to count the frequency of words in a text file.”

Output:

from collections import Counter

def word_frequency(file_path):
    """
    Count the frequency of words in a text file.

    Parameters:
        file_path (str): Path to the text file.

    Returns:
        dict: A dictionary with words as keys and their frequencies as values.
    """
    try:
        with open(file_path, 'r') as file:
            text = file.read()
        words = text.split()
        word_counts = Counter(words)
        return dict(word_counts)
    except Exception as e:
        print(f"An error occurred: {e}")

# Example usage
file_path = "example.txt"
print(word_frequency(file_path))

Why Use Gradio?

- Interactive UI: Simplifies the process of testing models.

- Accessible: Provides a shareable link for others to try the application.

- Customizable: Can be expanded to include additional input fields or outputs.

With this setup, you’ve turned a cutting-edge model like CodeLlama into an accessible and interactive Python code generator. 🚀